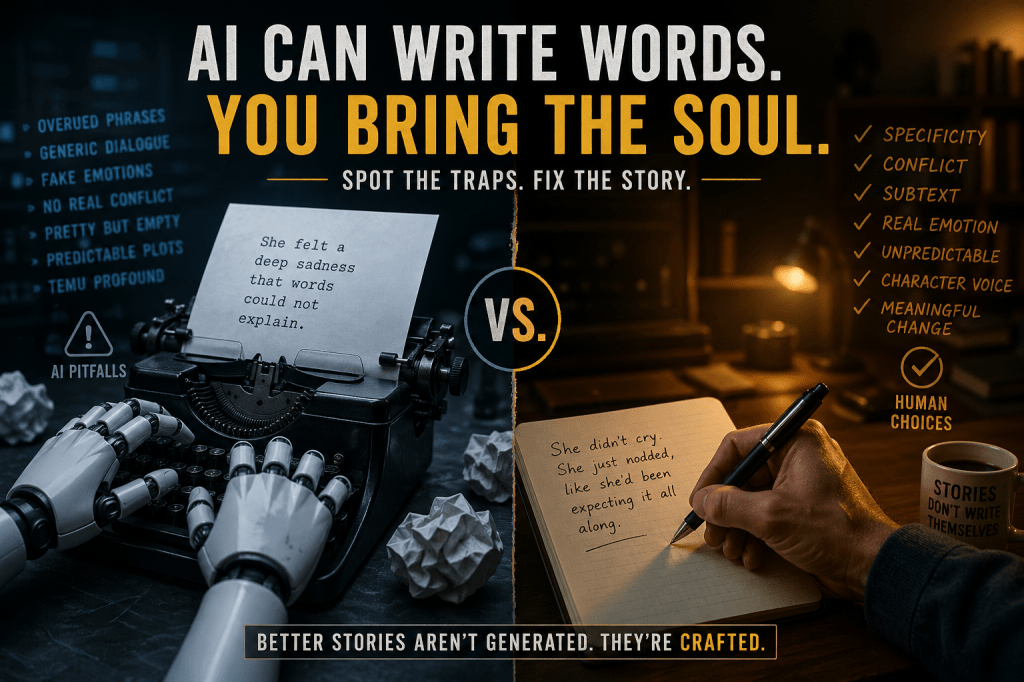

Let’s get something out of the way first. This isn’t one of those “AI writing is ruining fiction” pieces. I’m not here to panic or preach. Honestly, I use AI in my own writing workflow all the time, and it’s been genuinely useful.

But there’s a thing that happens. You read back a chapter you co-wrote with an AI, and something’s off. It’s technically fine. The sentences are clean. The dialogue is coherent. Nothing is embarrassing. And yet it somehow feels like eating a meal that looks great on the plate but has no real flavour. You finish it, and nothing lingers.

That’s not a mystery. It has specific causes, and once you understand them, you can actually fix it.

Here’s the thing that changed how I think about this: AI doesn’t write badly. It writes safely. It produces the most statistically probable version of a good story — which sounds impressive until you realise that the most probable version of something is rarely the most memorable version. Real storytelling lives in the weird corners, the contradictions, the details that don’t quite fit the pattern. AI, left to its own devices, smooths all of that out.

So instead of talking vaguely about “adding your voice,” let’s get into the specific traps and exactly how to counter them.

The Characters Know Themselves Too Well

What AI tends to do

AI characters are emotionally articulate from the first page. They understand their feelings, express them clearly, and speak in polished, considered dialogue. A grieving character will say something poetic about grief. A frightened character will describe their fear in beautiful, precise terms.

Why it happens

Large language models are trained on vast libraries of fiction and internet writing. They’ve absorbed the pattern of emotional scenes — the beats, the vocabulary, the rhythm — but they haven’t absorbed genuine human contradiction. When in doubt, the model defaults to emotional coherence because that’s what most of the training examples looked like.

The fix

Real people don’t have great access to their own inner lives. They deflect, change the subject, get angry when they mean to be vulnerable, crack jokes at completely the wrong moment.

For every major character, try building in:

- A conversational habit or verbal tic

- A blind spot they genuinely can’t see around

- A topic they avoid — not dramatically, just quietly

- At least one emotional contradiction (they say they don’t care; they clearly do)

- Vocabulary that shifts depending on who they’re talking to

A scared character shouldn’t always sound poetic. An angry character shouldn’t always say exactly what they mean. When you introduce those inconsistencies, the character stops feeling like a composite of fictional archetypes and starts feeling like a person.
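If you keep character notes in a file and paste them into prompts, the list above can be written down as a small structure. This is only a sketch in Python: the class name `CharacterSheet`, its field names, and the example character Mara are all invented for illustration and don't come from any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class CharacterSheet:
    """Hypothetical container for the five traits in the list above."""
    name: str
    verbal_tic: str
    blind_spot: str
    avoided_topic: str
    contradiction: str
    # Maps an audience (e.g. "her boss") to how the character's wording shifts.
    registers: dict[str, str] = field(default_factory=dict)

    def to_prompt_notes(self) -> str:
        """Render the traits as plain-text notes to paste into a prompt."""
        lines = [
            f"Character: {self.name}",
            f"- Verbal habit: {self.verbal_tic}",
            f"- Blind spot (never stated aloud): {self.blind_spot}",
            f"- Quietly avoids: {self.avoided_topic}",
            f"- Contradiction: {self.contradiction}",
        ]
        for audience, style in self.registers.items():
            lines.append(f"- With {audience}: {style}")
        return "\n".join(lines)


# An invented example character, purely for illustration.
mara = CharacterSheet(
    name="Mara",
    verbal_tic="ends statements with 'or whatever'",
    blind_spot="thinks she is the easygoing one in the family",
    avoided_topic="the year she dropped out",
    contradiction="says she doesn't care what her sister thinks; clearly does",
    registers={
        "her boss": "clipped, formal, no jokes",
        "her sister": "sarcastic, half-finished sentences",
    },
)

print(mara.to_prompt_notes())
```

The point isn't the code. Writing the traits down somewhere concrete makes it harder to forget them halfway through a draft, and the notes give the AI something specific to push against.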

The Scenes Look Dramatic But Nothing Actually Happens

What AI tends to do

You get scenes that feel cinematic on the surface — tense atmosphere, charged dialogue, a character having a big moment of reflection — but when you step back, nothing has actually changed. No new problem has appeared. No relationship has shifted. The characters have just exchanged information and moved on.

Why it happens

AI is predicting text that resembles a scene from a novel, and it’s quite good at that. It’s much less reliable at tracking escalating narrative pressure across dozens of chapters, or at making sure each scene does real load-bearing work in the story.

The fix

Before you draft any scene — whether you’re writing alone or prompting an AI — ask yourself four questions:

- What does the POV character actually want right now?

- What is actively in their way?

- What is different by the end of the scene?

- What new complication has been introduced?

If you can’t answer those, the scene probably shouldn’t exist yet. This isn’t a rigid formula — some scenes are quieter, more atmospheric, and that’s fine. But even those scenes should shift something, even subtly. A mood. A relationship. A character’s understanding of their situation. If nothing moves, the scene is furniture.
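For anyone who plans scenes in a script or a notes file, the four questions can be turned into a tiny pre-draft check. Treat this as a rough sketch in Python: `SceneBrief`, `QUESTIONS`, and `unanswered` are made-up names, and all the check does is flag blanks. It can't judge whether an answer is any good.

```python
from dataclasses import dataclass


@dataclass
class SceneBrief:
    """Hypothetical one-line answers to the four scene questions."""
    want: str = ""
    obstacle: str = ""
    change: str = ""
    complication: str = ""


# Field name -> the question it answers (wording taken from the list above).
QUESTIONS = {
    "want": "What does the POV character actually want right now?",
    "obstacle": "What is actively in their way?",
    "change": "What is different by the end of the scene?",
    "complication": "What new complication has been introduced?",
}


def unanswered(brief: SceneBrief) -> list[str]:
    """Return the questions whose answers are still blank."""
    return [
        question
        for name, question in QUESTIONS.items()
        if not getattr(brief, name).strip()
    ]


# An invented, half-planned scene for illustration.
draft = SceneBrief(
    want="to get the letter back before her sister reads it",
    obstacle="her sister is already home",
)

for question in unanswered(draft):
    print("Still open:", question)
```

A quieter scene can still pass with a small answer, such as "she finally understands why he left". The check only catches the scenes where you have nothing to write down at all.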

Everything Sounds Vaguely Profound

What AI tends to do

This one is sneaky because it sounds good in the moment. You read a line like:

“Sometimes the darkest prisons are the ones we build ourselves.”

And it feels like something. It has the shape of meaning. But read it again. What is it actually saying? Who is it about? What’s specific about this character, this moment, this story? Nothing. It could slot into ten thousand different novels and fit all of them equally.

Why it happens

AI is heavily optimised, whether by its training data or by human feedback on its outputs, to produce text that feels emotionally resonant. Generic wisdom hits that mark reliably. It sounds like depth. The trouble is, it’s depth-flavoured rather than actually deep.

The fix

When you catch a line like that, don’t just delete it. Ask what it’s trying to say, and then make it specific.

Instead of “the darkest prisons are the ones we build ourselves,” what if your character thinks about the specific way they kept lying to their sister for three years because admitting the truth felt worse than the lie? That’s the same idea. But it’s theirs. It belongs to this story and no other.

Specificity is the single fastest way to make writing feel human. Generic wisdom feels generated because it essentially is — it’s the average of a thousand similar thoughts. A concrete, messy, specific observation feels like a real person had it.

So What’s the Actual Takeaway?

AI isn’t broken, and it’s not the enemy of good writing. But it naturally drifts toward the safe, the generic, and the statistically reasonable — unless you actively push back against it.

That’s the real job when you’re using AI as a writing tool. Not just prompting it and accepting whatever comes out, but understanding why it makes the choices it makes, and knowing where to step in and pull the work back toward something stranger, more specific, and more alive.

The writer’s job hasn’t changed. It’s still about making choices that feel true. AI just means you now have a very fast, very capable collaborator who needs you to do the human part of that job a little more deliberately.

Which, honestly, isn’t the worst thing.
